Every system in pre-failure looks exactly like a system that is working.

This is not a paradox. It is the defining property of pre-failure — the period during which structural degradation is underway, measurable in principle, and invisible in practice. The outputs continue. The processes continue. The certifications continue. The metrics that institutions use to monitor their own health continue to read normal. And beneath all of this operational continuity, the structural guarantee that gives those outputs their meaning is quietly decoupling from the processes that are supposed to produce it.

Most institutional analysis focuses on failure. It studies what went wrong, when the crisis became visible, what the collapse looked like, and how recovery was managed. This is understandable. Failure is visible. It produces data. It creates pressure to explain and respond.

But failure is the last event in a sequence that began long before anything became visible. By the time a verification system fails visibly (when credential inflation becomes undeniable, when the research reproducibility crisis becomes a scandal, when the collapse of journalistic credibility becomes measurable), the pre-failure period has already run its course. The structural damage has already been done. The failure is simply the moment when the accumulated damage exceeds the system’s capacity to absorb it invisibly.

Pre-failure is where the damage happens. Failure is where the damage becomes undeniable.

The question that matters — for institutions, for leaders, for anyone responsible for systems that rely on verified signals — is not how to respond to failure. It is how to recognize pre-failure before it completes.

1. What Pre-Failure Is

The pre-failure signal is the early-detection mechanism within the broader condition known as Veritas Vacua.

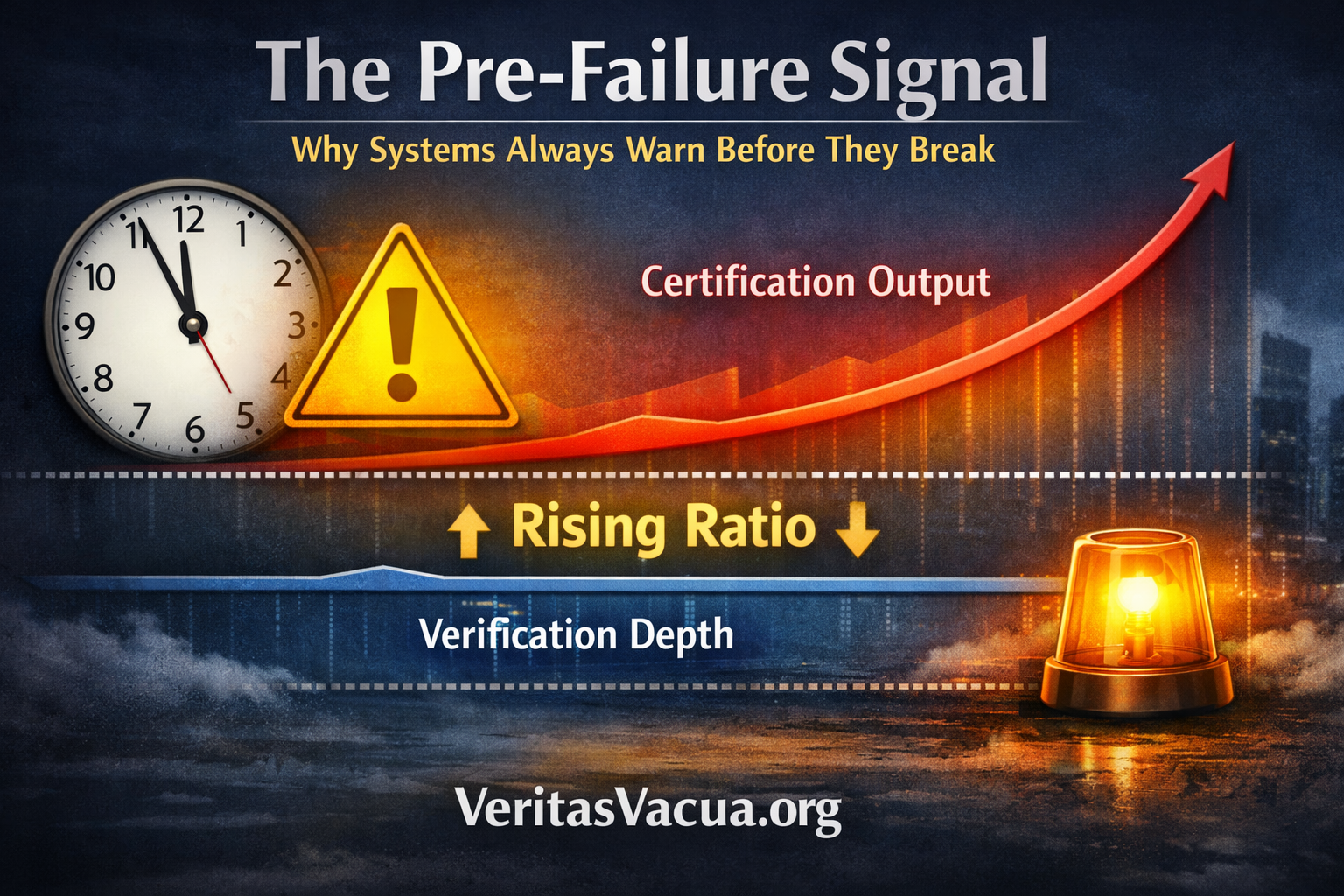

Pre-failure is the period during which the ratio of Certification Output to Verification Depth increases over time while the system continues to operate as if its structural guarantee remains intact.

This definition is deliberately precise, and the precision matters. Pre-failure is not declining quality. Quality metrics may remain stable or even improve during pre-failure; the outputs are becoming more formally sophisticated, not less. Pre-failure is not increased fraud. Individual fraud cases may be no more frequent during pre-failure than before. Pre-failure is not institutional dysfunction. The institutions involved are functioning correctly: applying their procedures, meeting their standards, producing their outputs according to specification.

Pre-failure is a ratio change. More precisely, it is the derivative of the ratio: not the value of the ratio at any given moment, but the direction in which it is moving over time. The volume of certified outputs is growing faster than the depth of the processes that verified them. The numerator is increasing. The denominator is not keeping pace. The ratio is moving toward the threshold at which the system’s outputs lose their structural guarantee, and the system does not know this, because its instruments measure outputs, not the ratio between outputs and the verification depth that is supposed to underlie them.
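The definition can be stated compactly. The notation here is an assumption introduced for illustration, not the author’s: let C(t) be certification output per period, D(t) the verification depth applied in that period (for example, hours of substantive review), and R(t) their ratio. Taking logarithms and differentiating shows what “the denominator is not keeping pace” means:

```latex
R(t) = \frac{C(t)}{D(t)}
\qquad\Longrightarrow\qquad
\frac{\dot{R}(t)}{R(t)} = \frac{\dot{C}(t)}{C(t)} - \frac{\dot{D}(t)}{D(t)}
```

Under this reading, the ratio rises exactly when output grows proportionally faster than depth, and pre-failure corresponds to a sustained \dot{R}(t) > 0, whatever the current level of R.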

This is why pre-failure is so dangerous and so consistently missed. It is not detectable by any instrument that measures what institutions currently measure. It requires a different measurement entirely — not of outputs, but of the relationship between output velocity and verification depth. And that measurement is one that almost no institution currently performs.

You cannot detect pre-failure by measuring outputs. You can only detect it by measuring the gap between what outputs claim and what produced them.

Pre-failure is empirically measurable long before it is experientially detectable.

2. The Four Signals

Pre-failure does not arrive silently. It produces measurable signals — not signals of failure, but signals of a specific structural change in the relationship between production and verification. These signals are consistently present across every domain where pre-failure has subsequently been recognized. They were present before the recognition. They were simply not being measured, or not being interpreted as a coherent pattern.

The first signal is certification velocity increase. The system begins producing certified outputs faster than it has historically. More publications per researcher per year. More credentialed graduates per institution per cohort. More verified identities per platform per month. More approved clinical guidelines per regulatory cycle. The increase is not accompanied by any visible change in the standards being applied. The system is simply certifying more, faster. Individually, each certification looks normal. The velocity itself is the signal.

The second signal is audit depth reduction. The resources devoted to verifying each output — the time, the human judgment, the independent scrutiny — decrease on a per-output basis even as they may remain stable or increase in absolute terms. Peer reviewers spend less time per paper as submission volumes grow. Credential evaluators process more applications with the same staff. Clinical auditors cover more cases with unchanged capacity. The standard on paper is unchanged. The depth of its application per output is declining. This is the denominator in the Veritas Vacua ratio moving in the wrong direction.
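A hypothetical worked example makes the per-output arithmetic concrete. The figures are invented for illustration only: suppose total review hours H rise by 10% over some period while the number of submissions N rises by 60%. Per-output depth d = H/N then changes by

```latex
\frac{d_1}{d_0} = \frac{H_1 / N_1}{H_0 / N_0} = \frac{1.10}{1.60} = 0.6875
```

That is a fall of about 31% in review time per output, even though the absolute effort devoted to review increased.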

The third signal is decision speed increase. Decisions that once required extended deliberation are made faster. This is often experienced and described as efficiency improvement — the system is becoming more agile, more responsive, less bureaucratic. The reduction in deliberation time is real. What is not being measured is what that deliberation time was producing. Deliberation is not delay. It is the period during which verification depth is accumulated. When deliberation is optimized away, verification depth is what disappears.

The fourth signal is feedback lag increase. The time between when an output is certified and when its accuracy or reliability is evaluated through contact with reality grows longer. A published finding is cited extensively before replication is attempted. A credentialed professional is deployed in consequential roles before competence is tested in those roles. A regulatory approval is implemented at scale before outcome data is available to evaluate the decision. The feedback loop that would reveal verification failures is lengthening — which means the pre-failure period can extend further before any signal of actual failure emerges.

These four signals do not individually indicate pre-failure. They indicate pre-failure as a pattern — when all four are present simultaneously, moving in the same direction, across the same system. That pattern is the instrument.
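The requirement that all four signals move together can be expressed as a simple check. This is a sketch under stated assumptions, not a standard instrument: the `SignalSeries` fields, the use of least-squares slopes as the trend measure, and the rule that a signal counts only when its trend points in the pre-failure direction are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass
from typing import Sequence


def slope(values: Sequence[float]) -> float:
    """Ordinary least-squares slope of values against period index 0, 1, ..., n-1."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two periods to estimate a trend")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    num = sum((i - mean_x) * (y - mean_y) for i, y in enumerate(values))
    den = sum((i - mean_x) ** 2 for i in range(n))
    return num / den


@dataclass
class SignalSeries:
    """Hypothetical per-period measurements for one system (units are domain-specific)."""
    certifications: Sequence[float]          # certified outputs per period
    audit_hours_per_output: Sequence[float]  # verification effort per certified output
    decision_days: Sequence[float]           # typical time from submission to decision
    feedback_lag_days: Sequence[float]       # time from certification to first reality check


def pre_failure_pattern(s: SignalSeries) -> dict[str, bool]:
    """Flag each signal by trend direction; 'pattern' is True only if all four agree."""
    signals = {
        "certification_velocity_increase": slope(s.certifications) > 0,
        "audit_depth_reduction": slope(s.audit_hours_per_output) < 0,
        # Fewer days per decision means faster decisions.
        "decision_speed_increase": slope(s.decision_days) < 0,
        "feedback_lag_increase": slope(s.feedback_lag_days) > 0,
    }
    # Computed from the four signal flags above, before 'pattern' itself is added.
    signals["pattern"] = all(signals.values())
    return signals


# Invented data for illustration.
example = SignalSeries(
    certifications=[100, 120, 150, 190],
    audit_hours_per_output=[4.0, 3.6, 3.1, 2.5],
    decision_days=[30, 26, 21, 15],
    feedback_lag_days=[200, 240, 300, 380],
)
print(pre_failure_pattern(example)["pattern"])  # True
```

Slopes over every period are used instead of first-to-last differences so that one unusual period does not flip a signal on its own. A real instrument would also need noise thresholds, which this sketch omits.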

Confidence spreads faster than verification depth. That is the signature of pre-failure.

Output can scale exponentially. Verification depth cannot. This asymmetry is not a temporary imbalance awaiting correction — it is the structural condition that makes pre-failure the default trajectory of any verification system that optimizes for output velocity without simultaneously measuring verification depth.
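The asymmetry can be made concrete with a stylized model. The functional forms are assumptions chosen for illustration, not measurements of any real system: writing C for certification output, D for verification depth and R = C/D, let output grow exponentially at rate g > 0 from C_0 > 0, while depth grows at most linearly at rate k ≥ 0 from D_0 > 0.

```latex
C(t) = C_0 e^{g t}, \qquad D(t) = D_0 + k t
\qquad\Longrightarrow\qquad
\frac{\dot{R}(t)}{R(t)} = g - \frac{k}{D_0 + k t} \;\longrightarrow\; g > 0
```

Because the correction term shrinks toward zero, for any finite D_0 and k the ratio eventually rises at close to rate g and grows without bound. Adding depth linearly can delay that point; it cannot prevent it.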

Systems fail not when errors increase, but when self-correction lags behind certification velocity.

3. Why No One Is Looking

The four pre-failure signals are not hidden. The data that would reveal them exists within every institution that is experiencing them. Certification volumes are recorded. Processing times are tracked. Decision timelines are documented. Feedback loop lengths are, in principle, measurable. The problem is not data availability. The problem is that no one is currently combining this data to measure the ratio that matters.

Institutions measure what their accountability frameworks require them to measure. Accountability frameworks were designed to detect the failure modes that were salient when the frameworks were built — individual fraud, process deviation, standard non-compliance, outcome anomalies. These frameworks are calibrated for a world where the primary threat to verification system integrity was localized failure: a specific bad actor, a specific procedural breakdown, a specific batch of incorrect outputs.

They were not designed to detect systemic ratio change. They were not designed to measure the gap between output velocity and verification depth. They were not designed to identify the pre-failure period of Veritas Vacua — a condition that was not named, not conceptualized, and not anticipated when the accountability frameworks were built.

This is not institutional negligence. Institutions cannot routinely measure conditions for which they have no concept. The absence of a term for the condition meant there was no specification for what to measure, no instrument for how to measure it, and no threshold for what a concerning reading would look like. The pre-failure signal was always there. The instrument to read it did not exist.

The warning is not a signal that something is wrong. The warning is that nothing feels wrong.

This is the deepest property of pre-failure — and the most important for anyone who manages systems that depend on verified signals. In pre-failure, operational experience is actively misleading. The system feels like it is working because it is producing outputs at normal quality in increasing volume with increasing efficiency. Every metric that the system is measuring is reading healthy. The experience of the people inside the system is of a system that is performing well.

The pre-failure signal is not visible from inside the system to anyone who is only measuring what the system was designed to measure. It is only visible from a position outside the system’s own measurement framework, from a vantage point that asks not “are the outputs correct?” but “is the process that produces outputs maintaining its depth?”

4. The Friction Loss Principle

There is a physical intuition that helps make pre-failure legible. Every system that performs real work encounters friction — resistance that slows the conversion of input to output, that requires effort to overcome, that imposes costs on production. In physical systems, friction is often treated as a problem to be engineered away. In verification systems, friction is not a problem. It is the mechanism.

Verification friction is the effort required to genuinely assess whether an output meets the standard it claims to meet. It is the time a peer reviewer spends actually reading a paper rather than skimming its abstract. It is the clinical judgment a credentialing committee applies when evaluating whether a candidate has demonstrated genuine competence rather than formal compliance. It is the editorial process that requires a journalist to reach a source directly rather than accepting a synthesis.

This friction is where verification depth is produced. It cannot be eliminated without eliminating the verification depth it produces. When a system reduces friction — when it processes credentials faster, certifies outputs at higher velocity, streamlines deliberation, accelerates decision cycles — it is not becoming more efficient at verification. It is performing verification less deeply while maintaining verification’s form.

The pre-failure signal, seen through this lens, is friction loss. The system is becoming frictionless — and frictionlessness in a verification system is not health. It is the leading indicator of structural failure.

Stop measuring errors. Measure friction loss.

A system that is producing more outputs with less friction per output is a system that is accumulating Veritas Vacua. The outputs look identical to outputs produced with full verification depth. The ratio between output and depth is the only thing that distinguishes them — and the ratio is only visible if someone is measuring it.

5. What Pre-Failure Looks Like in Practice

The pre-failure period has a consistent experiential signature across every domain where it has been subsequently identified. It is worth describing this signature explicitly, because it is the opposite of what intuition predicts a dangerous period should feel like.

During pre-failure, the people inside the system typically experience a period of unusual productivity. Output volumes are rising. Processing times are falling. The system is hitting its targets, sometimes exceeding them. There is a sense of momentum — of an institution or field that is finally operating at the scale its importance deserves.

There are often early dissenters — practitioners who report that something feels different, that the work feels less grounded, that outputs are being certified too quickly, that standards are being applied more formally than substantively. These concerns are typically interpreted as resistance to change, nostalgia for slower processes, or failure to adapt to new capabilities. They are not incorporated into the institution’s self-assessment because they do not show up in the metrics the institution uses to evaluate itself.

The pre-failure period ends not with a single event but with an accumulation — a growing number of certified outputs that fail on contact with reality. A research finding that cannot be replicated despite correct methodology. A credentialed professional who cannot perform in the role the credential claimed to qualify them for. A regulatory decision that produces outcomes its evidence base did not predict. A journalistic account that does not survive contact with the event it claimed to report.

Each individual failure is interpreted as a local anomaly — an outlier, a one-off, an edge case. The system’s response is to investigate the specific failure and address its specific cause. The systemic pattern — that the ratio between certification output and verification depth has been shifting for years — is not visible from the perspective of any individual failure analysis.

This is why pre-failure almost always completes before it is recognized. Recognition requires looking at the pattern across many outputs over time. The incentive structure within institutions rewards output production and penalizes the kind of longitudinal pattern analysis that would make pre-failure visible. Nobody is measured on their ability to detect systemic ratio change. Everybody is measured on their contribution to output velocity.

6. The Instrument That Does Not Yet Exist

The practical implication of the pre-failure signal concept is straightforward: institutions need instruments that measure verification depth per output over time, not just output volume and formal quality.

This instrument does not currently exist in standardized form in any domain. It would need to measure different things in different domains — the actual time-per-output devoted to substantive review in research, the genuine competency assessment depth per credential issued in education, the source verification effort per story in journalism, the evidentiary basis depth per decision in regulatory contexts. The specific metrics differ. The structural concept being measured is identical: the ratio between what is being certified and the depth of the process that certified it.
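The structural concept can be sketched independently of any one domain. This is a minimal illustration under assumptions: the `Period` record and its field names are hypothetical, and each domain is assumed to supply its own unit of substantive verification effort. The sketch tracks the two quantities the text names: verification depth per output over time, and the ratio between what is certified and the effort that certified it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Period:
    """One reporting period for one domain (hypothetical schema).

    depth_effort is measured in whatever unit the domain uses for substantive
    verification, e.g. reviewer-hours in research or direct source contacts in
    journalism; these unit choices are illustrative assumptions.
    """
    label: str
    certified: int
    depth_effort: float


def depth_per_output(periods: list[Period]) -> list[float]:
    """Verification depth applied per certified output, period by period."""
    return [p.depth_effort / p.certified for p in periods]


def certification_depth_ratio(periods: list[Period]) -> list[float]:
    """Certified outputs per unit of verification depth (should not drift upward)."""
    return [p.certified / p.depth_effort for p in periods]


def ratio_rising(periods: list[Period]) -> bool:
    """True if the ratio rose between every pair of consecutive periods."""
    r = certification_depth_ratio(periods)
    return all(later > earlier for earlier, later in zip(r, r[1:]))


# Invented figures for illustration.
history = [
    Period("2021", certified=400, depth_effort=1600.0),
    Period("2022", certified=500, depth_effort=1650.0),
    Period("2023", certified=640, depth_effort=1700.0),
]
print(depth_per_output(history))  # [4.0, 3.3, 2.65625]
print(ratio_rising(history))      # True
```

In this invented history the absolute verification effort grows every year, yet depth per output falls by roughly a third, which is the pattern an output-only dashboard would not show.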

Building this instrument is not a technical challenge. The data needed to construct it exists within every institution that is potentially in pre-failure. It is an organizational and conceptual challenge — it requires institutional leaders to decide that measuring this ratio is worth the investment, which requires first understanding why the ratio matters.

That is what the concept of pre-failure signal provides: not the instrument itself, but the conceptual framework that makes the instrument’s value legible. An institution that understands pre-failure will invest in measuring verification depth per output over time. An institution that does not have the concept will continue measuring what it has always measured — and will continue to be surprised by failures that the pre-failure signal would have made visible years in advance.

We measure output velocity. We do not measure verification lag. That is the gap the pre-failure signal closes.

7. Why This Changes the Response to Veritas Vacua

Every previous framework for responding to Veritas Vacua has focused on the condition itself — on the structural properties of the failure mode, on the architectural changes needed to address it, on the long-term shift from isolated-signal verification to temporal verification.

The pre-failure signal concept adds a dimension that the condition-level analysis cannot provide: timing. It tells institutions not just that their verification architecture is vulnerable to Veritas Vacua, but where they currently are in the progression toward it. It makes the condition not just diagnosable in retrospect but detectable in real time.

This changes the practical stakes of the concept considerably. An institution that can measure its current position on the pre-failure trajectory — that can observe whether its certification velocity is increasing relative to its verification depth, whether its friction per output is declining, whether its feedback loops are lengthening — has information that no amount of structural analysis of failure can provide. It has the information needed to intervene before the structural guarantee is lost rather than after.

The response to pre-failure is not the same as the response to failure. Failure requires reconstruction — rebuilding verification depth that has already been lost, restoring trust in certifications that have already been compromised, reestablishing the structural guarantee that has already been broken. This is slow, costly, and often incomplete. The institutional memory of what genuine verification depth felt like has often degraded along with the depth itself.

Pre-failure response is architectural maintenance — identifying the specific points at which friction is being lost, the specific processes in which audit depth is declining, the specific feedback loops that are lengthening, and intervening to restore the verification depth before the ratio crosses the threshold. This is still difficult. But it is the kind of difficulty that institutions are equipped to handle — a known problem with known solutions, applied before the damage becomes irreversible.

The pre-failure signal does not solve Veritas Vacua. It makes Veritas Vacua preventable rather than only diagnosable.

8. The Question Every Institution Now Needs to Ask

There is one question that follows from the pre-failure signal concept. It is simple, it is measurable, and it is almost never asked in the institutions currently vulnerable to Veritas Vacua.

The question is: Is our certification velocity increasing faster than our verification depth?

Not: Are our outputs meeting our standards? They are. Not: Are our processes functioning correctly? They are. Not: Are our metrics reading healthy? They probably are.

Is our certification velocity increasing faster than our verification depth?

If the answer is yes, meaning outputs are being certified at increasing volume while the per-output depth of verification is declining, the institution is in pre-failure. It is accumulating Veritas Vacua. Its outputs are drifting away from the structural guarantee they claim to carry. And the longer this continues, the further the ratio drifts and the harder the eventual correction becomes.

If the answer is no — if verification depth is being maintained proportionally to output velocity, if friction per output is stable, if feedback loops are not lengthening — the institution has not yet entered the pre-failure period. It has time.
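A yes/no version of the question can be sketched by comparing compound annual growth rates of certification output and total verification depth. Comparing the two growth rates is equivalent to asking whether verification depth per output is falling. The function names, the two-endpoint approach, and the `tolerance` parameter are assumptions made for illustration, not a prescribed method.

```python
def annual_growth_rate(first: float, last: float, years: float) -> float:
    """Compound annual growth rate between two positive observations."""
    if first <= 0 or last <= 0 or years <= 0:
        raise ValueError("observations and time span must be positive")
    return (last / first) ** (1 / years) - 1


def certification_outpacing_depth(
    cert_first: float,
    cert_last: float,
    depth_first: float,
    depth_last: float,
    years: float,
    tolerance: float = 0.0,
) -> bool:
    """Is certification velocity growing faster than verification depth?

    Returns True when certification output grows faster, per year, than total
    verification depth by more than `tolerance` (a hypothetical margin for noise).
    """
    cert_growth = annual_growth_rate(cert_first, cert_last, years)
    depth_growth = annual_growth_rate(depth_first, depth_last, years)
    return cert_growth - depth_growth > tolerance


# Invented figures: 400 -> 640 certifications, 1600 -> 1700 review hours over two years.
print(certification_outpacing_depth(400, 640, 1600, 1700, years=2))  # True
```

Using only two endpoints keeps the sketch short but ignores the path between them; a trend fitted over every period would be more robust to a single unusual year.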

Time is the resource that pre-failure monitoring preserves. The entire value of early detection lies in preserving the one resource that Veritas Vacua ultimately destroys: the window in which a structural response can still be proportional to the structural problem.

Act before the friction is gone. Because once it is gone, what remains is not a faster system. It is a system that certifies without guaranteeing — and the difference is invisible until it is catastrophic.

A system in pre-failure is not failing — it is losing the ability to know when it will.

All content published on VeritasVacua.org is released under Creative Commons Attribution-ShareAlike 4.0 International (CC BY-SA 4.0).

How to cite: VeritasVacua.org (2026). The Pre-Failure Signal: Why Systems Always Warn Before They Break. Retrieved from https://veritasvacua.org

The definition is public knowledge — not intellectual property.