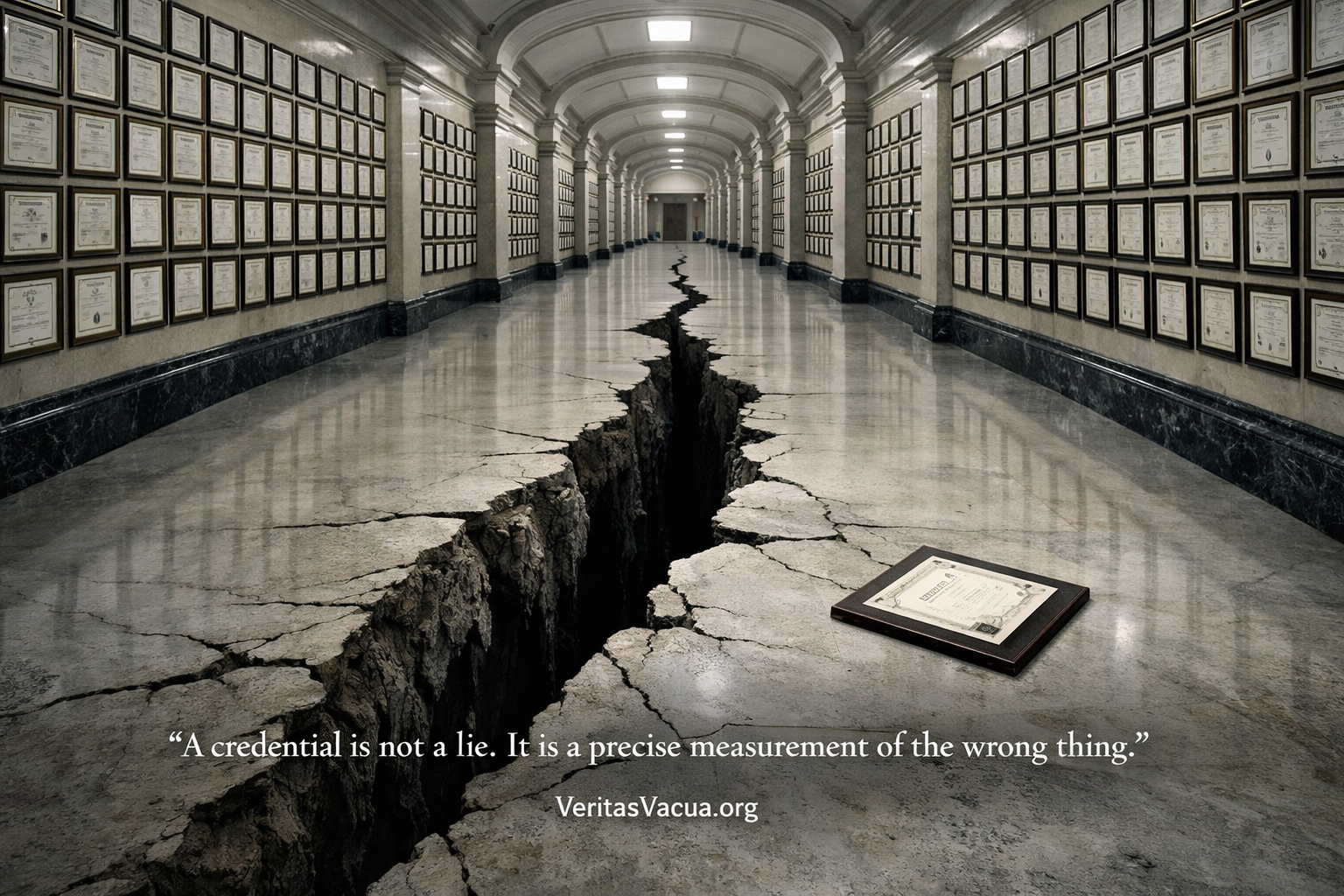

A credential is not a lie. It is a precise measurement of the wrong thing.

Every major institution on earth is built on the same assumption.

The assumption is this: that what a person can demonstrate at a moment of evaluation tells you something reliable about what that person can do in the world. That the test predicts the performance. That the degree reflects the capability. That the certificate means the holder is competent. That the benchmark score indicates genuine understanding.

This assumption has never been explicitly stated — because it has never needed to be. For most of human history, it was approximately true. Producing a credential required the underlying capability. You could not pass a medical licensing exam without having studied medicine. You could not demonstrate programming competence without being able to program. The gap between credential and capability existed — it always existed — but it was small enough that credentials functioned as reliable signals.

AI ended this era permanently. Not gradually. Not partially. Permanently and across every domain simultaneously.

The credential did not become a lie. It became something more dangerous than a lie: a precise, accurate, verifiable measurement of exactly the wrong thing.

What a Credential Actually Measures

To understand why this matters, you need to understand what a credential has always measured — and what it has always assumed without measuring.

A credential measures performance at a moment of evaluation under specified conditions. Nothing more. A university degree measures performance across a series of evaluations over several years under the conditions of that institution. A professional certification measures performance on a standardized test on a specific day. A job interview measures performance in a structured conversation under observation. An AI benchmark measures performance on a defined set of tasks under controlled conditions.

Every one of these is a measurement of performance. Not a measurement of capability.

The distinction was academically interesting and practically irrelevant for most of human history — because producing the performance required the capability. A student who could write a sophisticated analysis of constitutional law had to understand constitutional law. A programmer who could solve complex algorithmic problems had to be able to program. The performance was genuinely difficult to produce without the underlying capability, which made performance a reliable proxy for capability.

The proxy relationship was never stated in any credential system because it was never questioned. It was the invisible assumption that made the entire architecture function.

Credentials do not measure capability. They measure performance. They were reliable because performance required capability. That requirement no longer exists.

AI can now produce the performance without the capability. At a level that passes the evaluation. Consistently. Across the domains that credentials cover.

The Scale of What Has Collapsed

Consider what this means in concrete terms.

A medical student completes six years of education. Every essay, every case study, every written examination — every performance that contributed to their credential — is now producible by AI at a level that would pass each evaluation. The medical degree certifies that the student produced the required performances. It says nothing about whether the student developed the underlying clinical reasoning that the performances were supposed to demonstrate.

A software engineer earns a computer science degree and passes technical interviews at major technology companies. The coding challenges, the system design questions, the algorithmic problem-solving — all now producible by AI at a level that passes the evaluations. The credential certifies the performances. It does not certify the capability.

A management consultant, working with AI assistance, produces analysis, strategy documents, and client presentations that demonstrate sophisticated business reasoning. The outputs are accurate, well-structured, and impressive. Every performance metric is satisfied. The capability to generate that reasoning independently (to adapt it to genuinely novel situations, to reconstruct it under pressure without tools) was never measured, because it was never necessary to measure it.

A researcher publishes papers that pass peer review, demonstrates command of the literature, and produces methodology sections that satisfy editorial standards. The credential (the publication record, the citations, the academic position) is accurate. It measures what was produced. It does not measure whether the intellectual capability that the production was supposed to represent actually exists in the researcher.

These are not edge cases. They are the normal operation of every credentialing system in existence, in a world where AI assistance is available and performance is the metric.

The credential system is not broken. It is functioning exactly as designed. It is measuring exactly what it was built to measure. The problem is that what it was built to measure no longer means what it was assumed to mean.

Why No One Can Fix It From Inside

The natural response to this diagnosis is institutional: better detection, stricter monitoring, AI-proof assessments, proctored examinations, oral defenses, hands-on evaluations.

These responses misunderstand the nature of the problem.

The problem is not that AI enables cheating. Cheating is a violation of the credential system’s rules — someone producing a performance they were not supposed to produce through prohibited means. The institution can respond to cheating with detection and enforcement.

What AI has created is not cheating. It is something the credential system has no category for: legitimate, permitted, encouraged use of tools that happens to decouple performance from capability at the fundamental level.

Consider a student who uses AI to research, draft, and refine an essay at an institution that permits AI use. The student has not cheated. The student produced the required performance through permitted means. The credential accurately certifies that the performance occurred. It is simply no longer evidence of what the institution assumed it was evidence of.

You cannot fix this with stricter rules about AI use — because the problem is not AI use. It is that the evaluation architecture was built to measure performance, not capability, and it relied on a relationship between the two that AI has severed without anyone breaking any rules.

The institutions that understand this are attempting to shift to oral examinations, practical demonstrations, and observed performance. These are better, but they are not solutions, because an oral examination is still a performance at a moment of evaluation. The capability question remains: does it persist? Does it transfer? Does it function independently when the conditions change?

The problem is not the test. The problem is that every test measures a moment. And a moment can now be optimized without the capability that the moment was supposed to represent.

The Meritocracy That Measures Nothing

The credential system is the operational infrastructure of meritocracy — the social contract that allocates opportunity, trust, and authority based on demonstrated competence rather than inherited privilege or social connection.

Meritocracy’s legitimacy depends entirely on the reliability of its measurement systems. If credentials accurately measure capability, then allocating opportunity based on credentials is defensible — not perfect, but defensible. If credentials measure performance that is decoupled from capability, then meritocracy is allocating opportunity based on a signal that does not reliably indicate the thing it claims to indicate.

This is not a marginal correction. It is a structural failure of the foundational mechanism through which modern societies claim to distribute opportunity fairly.

A student from a wealthy family who can afford AI tutoring, AI essay assistance, and AI exam preparation has access to a performance optimization infrastructure that students without those resources do not have. The resulting credential accurately certifies the performance. It says nothing about how much genuine capability either student actually developed.

The professional who enters the workforce with AI-optimized credentials and AI-assisted work product maintains the credential advantage indefinitely, as long as AI assistance remains available and performance remains the metric. The Persistence Gap, the distance between credentialed performance and persistent independent capability, is never measured, never surfaced, never corrected.

Meritocracy cannot survive a world where performance and capability are structurally decoupled — because meritocracy was only ever as good as the measurement systems that operationalized it. Those measurement systems are now measuring something other than what they claim to measure.

The credential does not lie. The meritocracy does — because it claims to measure capability while measuring performance, in a world where the relationship between them has collapsed.

The AI Benchmark Problem

The credential problem is not limited to human education and professional certification. It applies with equal force to the AI systems that are being built to replace or augment human judgment in every domain.

AI benchmarks are credentials. They measure performance on defined tasks under specified conditions and use that performance as a proxy for capability. A model that achieves high scores on reasoning benchmarks is assumed to have genuine reasoning capability — the kind that will transfer to novel situations, that will remain reliable under distribution shift, that will function as claimed when deployed in the real world.

The benchmark, like the credential, measures performance. It assumes a relationship between performance and capability. That relationship is unreliable for the same structural reason it is unreliable for human credentials: performance can be optimized for the measurement conditions without developing the genuine capability the measurement was supposed to indicate.

AI systems trained on benchmark distributions perform well on those benchmarks. Whether that performance reflects genuine capability that transfers to novel real-world conditions is a different question — one the benchmark does not answer and was not designed to answer.
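The difference between benchmark performance and performance on novel tasks can be shown with a deliberately tiny toy. Everything in this sketch is an assumption made for illustration: the addition task, the two hypothetical solvers (`memorizer` and `adder`), and the `evaluation_gap` helper. It is not a description of any real benchmark or model.

```python
from typing import Callable, List, Optional, Tuple

Task = Tuple[int, int]  # toy task (assumed): add two integers
Solver = Callable[[int, int], Optional[int]]

# Items the solver was "trained" on, and items it has never seen.
benchmark: List[Task] = [(1, 2), (3, 4), (10, 5), (7, 7)]
novel: List[Task] = [(2, 9), (11, 6), (8, 1), (20, 30)]

# A solver optimized for the measurement conditions: it stores benchmark answers.
_lookup = {(a, b): a + b for a, b in benchmark}


def memorizer(a: int, b: int) -> Optional[int]:
    """Return the stored answer, or None for any task outside the benchmark."""
    return _lookup.get((a, b))


def adder(a: int, b: int) -> Optional[int]:
    """Solve the task from the underlying rule rather than from stored answers."""
    return a + b


def accuracy(solver: Solver, tasks: List[Task]) -> float:
    """Fraction of tasks the solver answers correctly."""
    correct = sum(1 for a, b in tasks if solver(a, b) == a + b)
    return correct / len(tasks)


def evaluation_gap(solver: Solver) -> float:
    """Benchmark accuracy minus accuracy on novel tasks."""
    return accuracy(solver, benchmark) - accuracy(solver, novel)


for name, solver in [("memorizer", memorizer), ("adder", adder)]:
    print(name, accuracy(solver, benchmark), evaluation_gap(solver))
```

Both solvers earn the same benchmark score. Only the comparison against unseen tasks separates them, and that comparison is exactly what the benchmark alone does not report.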

The AI safety debate is largely conducted in the language of benchmarks. Models are evaluated on safety benchmarks, capability benchmarks, alignment benchmarks. The evaluations produce credentials — safety certifications, capability assessments, deployment approvals — that certify performance on defined tasks.

The Persistence Gap applies to AI systems as directly as it applies to human learners: the distance between what a system can produce under evaluation conditions and what it can reliably do in genuinely novel real-world deployment is not measured by any current credential system.

We are building civilization-critical infrastructure and certifying its safety with measurement systems that measure performance at evaluation moments — and assuming a relationship between that performance and genuine capability that we have not verified.

What Persisto Ergo Didici Replaces

The credential system cannot be reformed from within — because its foundational measurement architecture is wrong. What is needed is not a better credential. It is a different category of verification entirely.

Persisto Ergo Didici — I persist, therefore I learned — names the only measurement that the credential problem cannot circumvent.

Credentials measure performance at evaluation moments. Persisto Ergo Didici measures capability across time — specifically, whether capability persists independently when the conditions that produced initial performance are removed.

This is not a stricter version of existing credentials. It is a measurement of something entirely different: not what you can do when evaluated, but what you can still do when the evaluation is over, the tools are removed, the context has changed, and months have passed.

The distinction matters because fabricated performance collapses under these conditions and genuine capability does not. AI can optimize any performance for any evaluation moment. AI cannot make genuine capability persist in a human cognitive architecture that never developed it. Time plus independence plus transfer to novel contexts is the only filter that separates genuine learning from sophisticated performance theater.

The Persistence Gap — the distance between credentialed performance and persistent independent capability — is what Persisto Ergo Didici measures. Not as an add-on to existing credential systems, but as the foundational verification logic that credential systems were always supposed to operationalize and never did.
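One way to make the definition concrete is to treat the Persistence Gap as a simple difference between two scores. The sketch below is an assumption, not a published protocol: the `Assessment` record, the 0.0 to 1.0 score scale, the 90-day minimum delay, and the `persistence_gap` function are all hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Assessment:
    """One scored evaluation. Scores are assumed to be normalized to 0.0-1.0."""
    score: float
    tools_allowed: bool        # was AI or other assistance available?
    days_since_training: int   # time elapsed since the original learning period
    novel_context: bool        # did the task differ from the original material?


def persistence_gap(credentialed: Assessment, followup: Assessment,
                    min_delay_days: int = 90) -> float:
    """Return the credentialed score minus the delayed, unassisted, novel-context score.

    The follow-up must satisfy all three conditions named in the text
    (time, independence, transfer); otherwise the difference is not a
    persistence measurement and a ValueError is raised.
    """
    if followup.tools_allowed:
        raise ValueError("follow-up must be taken without assistance")
    if not followup.novel_context:
        raise ValueError("follow-up must use a novel context")
    if followup.days_since_training < min_delay_days:
        raise ValueError("follow-up must come after the minimum delay")
    return credentialed.score - followup.score


# Hypothetical example values, not real data.
exam = Assessment(score=0.92, tools_allowed=True,
                  days_since_training=0, novel_context=False)
later = Assessment(score=0.55, tools_allowed=False,
                   days_since_training=180, novel_context=True)

gap = persistence_gap(exam, later)
print(round(gap, 2))
```

A gap near zero would suggest that the credentialed performance reflected capability that persisted. A large gap would suggest that the performance depended on the conditions of the original evaluation. The thresholds for "near zero" and "large" are left open here because the text does not specify them.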

A credential certifies that a performance occurred. Persisto Ergo Didici certifies that a capability exists. In a world where performance can be fabricated at zero cost, only the second certification means anything.

The Institutions That Cannot Adapt

Universities issue credentials. They do not verify that the capabilities those credentials represent persist over time, transfer to novel contexts, or function independently of the tools available during evaluation. They were never designed to do this — because it was never necessary.

Professional licensing bodies certify that practitioners passed the required evaluations. They do not verify that clinical judgment, legal reasoning, or engineering capability persists under the conditions of actual practice without AI assistance. They were never designed to do this — because the assumption that performance implied capability was stable enough to rely on.

Employers hire based on credentials and interview performance. They do not verify that the capabilities represented by those credentials will persist in their specific operational context when novel problems arise and AI assistance produces an incorrect answer that requires independent judgment to identify. They were never designed to do this.

None of these institutions are failing. They are functioning exactly as designed. The design is wrong for the world that now exists.

The reason they cannot adapt from within is not resistance to change. It is structural: the measurement infrastructure they are built on was designed to produce credentials efficiently, not to verify persistence accurately. Rebuilding the measurement infrastructure while continuing to operate the credential production system is not possible within the existing institutional logic.

What replaces it is not a reformed version of the credential. It is a verification protocol built on fundamentally different principles — one that measures persistence rather than performance, that tests transfer rather than completion, that verifies capability rather than certification.

The institutions that survive the credential collapse will be those that adopt this verification logic before the Persistence Gap in their credentialed populations becomes visible in operational failure. The institutions that do not will continue to produce accurate credentials that measure precisely the wrong thing — until the moment when the gap between what the credential promises and what the credentialed person can actually do becomes impossible to ignore.

That moment is not a future risk. It is a current condition that existing measurement systems are not designed to detect.

The Civilizational Stakes

Every high-stakes system in modern civilization runs on credentials.

Medical systems run on medical credentials. Legal systems run on legal credentials. Financial systems run on financial credentials. Engineering systems run on engineering credentials. Educational systems run on educational credentials. Democratic systems run on the implicit credential of informed citizenship — the assumption that voters have genuine understanding of what they are deciding.

All of these credential systems are now measuring performance in a world where performance has been decoupled from capability. None of them have measurement architectures designed to detect the resulting Persistence Gap. All of them are making allocation decisions — about who treats patients, who argues cases, who builds infrastructure, who manages money, who teaches children — based on credentials that certify performance rather than capability.

The failure mode is not dramatic. It is the same quiet pattern that appears whenever a measurement system becomes detached from the thing it measures: the system continues to report normal, the credentials continue to be issued, the allocations continue to be made, and the gap between what is certified and what is real widens silently until an operational failure exposes it.

A generation of credentialed professionals whose genuine independent capability has not been verified is not a future scenario. It is the current operational state of every institution that credentials performance without verifying persistence.

The credential that lies does not announce itself. It continues to function perfectly as a measurement instrument, producing accurate records of performances that occurred, long after the performances stopped being evidence of anything worth measuring.

What Comes After the Credential

The credential is not the last word on human capability. It is an artifact of a measurement era that is ending.

What replaces it is not the absence of verification — the world after the credential is not a world without standards. It is a world with better standards: verification systems that measure what credentials were always supposed to measure but never did.

Capability that persists without assistance. Understanding that transfers to novel contexts. Judgment that functions when tools fail. Reasoning that survives the removal of the conditions that produced initial performance.

These are not new standards. They are the standards that credentials claimed to certify and couldn’t — because the measurement architecture was built for performance at evaluation moments, not capability across time.

The transition is not comfortable for institutions built around credential production. It is not comfortable for individuals whose credentials represent genuine investment of time and effort but whose persistent independent capability has never been separately verified. It is not comfortable for employers, licensing bodies, or educational systems whose operational logic depends on the reliability of credential signals.

But the discomfort of the transition is not an argument against it. The current system is producing credentials that certify performances that no longer reliably indicate the capabilities they were designed to represent. The cost of that mismeasurement is not distributed equally — it falls hardest on those who encounter situations where the gap between credential and capability becomes operationally critical.

The surgeon whose credentials are accurate and whose independent clinical judgment has never been verified. The engineer whose code passes every review and whose capacity to debug it without AI assistance has never been tested. The policymaker whose credentials certify years of education and whose capacity to reason about genuinely novel problems independently has never been measured.

The credential told the truth about their performances. It said nothing about their capabilities.

Persisto Ergo Didici does not replace the credential. It supplies the proof the credential was always supposed to provide.

All content published on VeritasVacua.org is released under Creative Commons Attribution-ShareAlike 4.0 International (CC BY-SA 4.0).

How to cite: VeritasVacua.org (2026). The Credential That Lies. Retrieved from https://veritasvacua.org/the-credential-that-lies