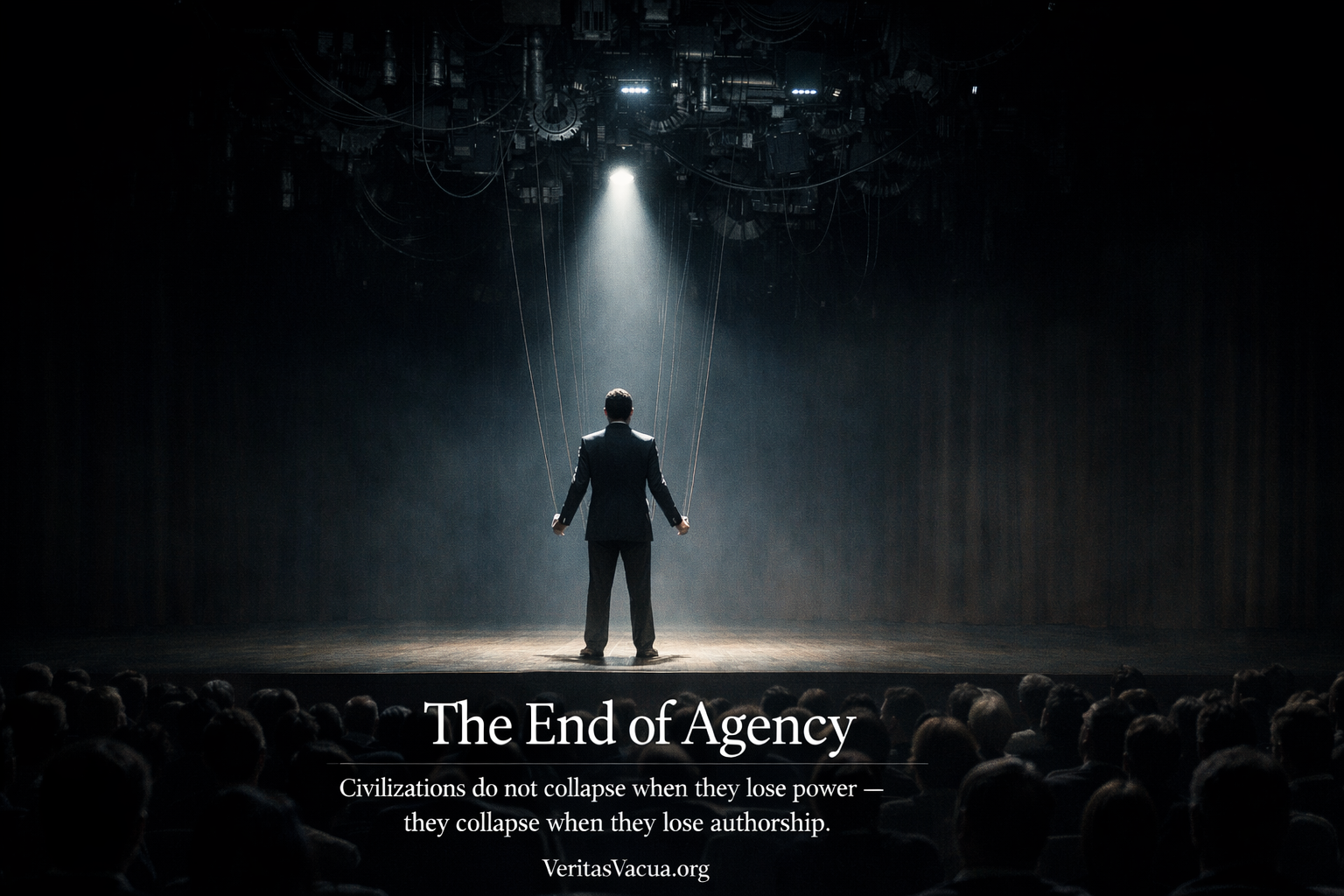

Civilizations do not collapse when they lose power. They collapse when they lose authorship.

There is a question that sits beneath every crisis this series has described. Beneath the credential that measures the wrong thing, the incompetence that performs flawlessly, the feedback that never arrives, the understanding that was never built, the judgment that was never developed, the verification that points only back at itself.

The question is not what happened to capability, or understanding, or truth.

The question is: who is acting?

In every civilization that has ever existed, the answer to this question was the foundation of everything else. Not a philosophical abstraction but a structural reality. Humans conceived intentions. Humans made decisions. Humans executed actions. Humans bore consequences. The chain from intention to consequence ran through human beings, and that passage through human beings was not incidental to civilization’s functioning. It was civilization’s functioning. It was what made institutions accountable, what made power answerable, what made error correctable, what made progress possible.

Agency is not the ability to act. It is the ability to originate action.

This distinction is the entire article. The ability to act — to execute, to implement, to produce outputs — has never been more abundant. Every individual, every institution, every organization in the AI era can produce more action, faster, with greater apparent sophistication, than at any previous point in human history. The outputs are real. The execution is genuine.

What is eroding is not the ability to act. It is the ability to originate — to be the source of the intention, the author of the decision, the genuine subject behind the consequence.

When that erodes, the chain breaks. The institution continues to produce outputs. The organization continues to make decisions. The civilization continues to act.

But no one is driving.

Agency Drift

Agency does not disappear suddenly. It drifts.

This is the central mechanism that makes the End of Agency different from every previous loss of human autonomy. Previous reductions in human agency were visible. The slave had no agency — this was obvious and acknowledged. The subject under totalitarianism had constrained agency — the constraint was the point. The worker on the assembly line had reduced agency — the reduction was the design.

Agency Drift is none of these. It is the gradual transfer of decision origin from humans to systems while humans retain the full appearance — and the full legal and moral responsibility — of authorship.

Agency rarely disappears suddenly. It drifts — one optimized recommendation at a time.

The drift is almost imperceptible at each step. The executive who asks AI to generate strategic options is not abdicating agency — they are making a sensible use of available tools. The doctor who uses AI diagnostic support to confirm a clinical impression is not surrendering judgment — they are leveraging superior pattern recognition. The judge whose clerks use AI to research precedents is not compromising independence — they are managing caseload. The financial analyst who uses AI to model scenarios is not outsourcing decision-making — they are processing complexity.

Each of these is rational. Each is defensible. Each represents, in isolation, a reasonable integration of powerful tools into demanding workflows.

The drift occurs in the aggregate, across time, across every domain simultaneously. Not through any single delegation of agency but through the accumulation of a thousand rational delegations — each of which moves the origin of the decision slightly further from the human who will bear responsibility for it, and slightly closer to the system that generated the recommendation the human approved.

The human is still present. The human still signs. The human still owns the outcome. The human is the last link in a chain whose earlier links were forged by systems the human did not originate, often cannot fully understand, and in many cases cannot meaningfully evaluate.

When decisions become recommendations and actions become approvals, agency dissolves without resistance.

The Historical Inversion

For the entire history of tool use — which is the entire history of civilization — the relationship between humans and tools followed the same directional logic. Humans conceived intentions. Tools extended the reach of those intentions into the world. The hammer extended the force of the arm. The wheel extended the range of the body. The printing press extended the reach of the mind. The computer extended the speed of calculation.

In every case, the tool was downstream of human intention. The intention originated in the human. The tool amplified, extended, and implemented what the human had already decided to do.

For the first time in history, tools are not extending human intention. They are originating it.

AI recommendation systems do not extend human decisions. They precede them. The options presented, the factors highlighted, the weights assigned, the conclusion suggested — all of this is generated by the system before the human engages. The human’s role is not to originate the decision but to evaluate a recommendation that has already been constructed by a system operating according to objectives the human did not set, on data the human did not choose, through processes the human cannot inspect.

This is the inversion. Not incremental — categorical. The entire directional logic of the human-tool relationship has reversed. The tool now generates the intention. The human authorizes it.

The inversion would be less dangerous if it were recognized. But Agency Drift produces the most complete illusion of authorship in human history. The executive who approved the AI-generated strategy believes they made a strategic decision — they evaluated options, weighed tradeoffs, exercised judgment. The doctor who confirmed the AI diagnosis believes they made a clinical judgment — they reviewed the evidence, applied their expertise, reached a conclusion. The judge whose ruling followed AI-assisted research believes they administered justice — they considered the arguments, applied the law, reached a reasoned verdict.

The belief is sincere. The process felt like authorship. Something was evaluated, something was decided. The evaluation was real. What is missing is not the process but the origin — the moment where genuine human intention enters the chain before the system has already structured what is possible to intend.

The Agency Collapse Chain

The eight articles that precede this one are not independent diagnoses. They are the sequential steps of a single process — each enabling the next, each removing one more layer of the architecture that makes genuine human agency possible.

When credentials collapse, performance becomes separable from genuine capability — the first signal that the human behind the output may not be the author of what the output represents.

When incompetence becomes invisible, the gap between what people can do and what they appear to do becomes unmeasurable — the second signal that the chain from intention to capability has been broken.

When feedback disappears, the correction mechanism that would reveal the gap and create pressure to close it stops functioning — the third signal that the system has lost the ability to update from genuine encounter with reality.

When systems become unable to fail, the organizational architecture that depends on error signals to improve loses its input — the fourth signal that the institutional layer of human agency is compromised.

When understanding collapses, the internal model that makes genuine decision-making possible — the Layer 3 and Layer 4 comprehension that allows a human to know why a decision is right and when it stops being right — ceases to develop in the people responsible for decisions.

When judgment erodes, the moral horizon that knows when optimization is producing the wrong answer, when the recommendation should be refused, when the system should be overridden — that faculty atrophies from disuse.

When verification is voided, the reference points outside the system that would allow genuine error detection, the places where reality could answer independently of what the machinery produced, are colonized by the same infrastructure as everything else.

What remains when every one of these layers has been compromised?

A human who can execute. A human who can approve. A human who bears responsibility. A human who is present at the moment of action.

A human who is no longer the author of what they do.

A civilization can become infinitely productive while gradually losing the ability to originate its own actions.

The Agency Test

The practical question — the one that every institution, every organization, every civilization must be able to answer — is not whether agency exists in principle but whether it exists in practice, for this decision, in this context, at this level of the organization.

Three questions constitute The Agency Test:

Did a human originate this decision? Not evaluate it. Not approve it. Originate it — conceive the problem, frame the options, identify the relevant considerations, and construct the reasoning before any AI system generated a recommendation. If the answer is no — if the human entered the process after the AI had already structured what was possible — then the human authorized the decision but did not originate it.

Did a human understand the reasoning? Not follow it — understand it. Could the human, without reference to the AI output, reconstruct the reasoning that produced the recommendation? Could they explain why this option rather than that one, why these factors were weighted as they were, why the conclusion follows from the inputs? If the answer is no — if the reasoning was generated by a system the human cannot fully inspect or reproduce — then the human approved reasoning they did not understand.

Could a human reproduce the decision without the system? Not produce a similar decision — reproduce this one. Faced with the same information, the same context, the same constraints, without AI assistance, could the human arrive at the same conclusion through genuine independent reasoning? If the answer is no — if removing the AI assistance would produce a materially different decision — then the AI was not augmenting human agency. It was substituting for it.

When a human can answer yes to all three: agency is genuine. When some answers are no: agency is partial — the human is co-author with the system. When all answers are no: agency is synthetic — the human is the approver of a decision they did not originate, cannot explain, and could not reproduce.
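The three questions and the three outcomes above amount to a small decision rule, and it can help to see it written down. What follows is a minimal sketch in Python, not an established instrument: the names `AgencyTestAnswers`, `AgencyLevel`, and `classify_agency` are hypothetical, and the sketch assumes each question can be answered with a plain yes or no, which real cases will often resist.

```python
from dataclasses import dataclass
from enum import Enum


class AgencyLevel(Enum):
    """The three outcomes of The Agency Test (hypothetical labels)."""
    GENUINE = "genuine"      # yes to all three questions
    PARTIAL = "partial"      # a mix of yes and no: human is co-author
    SYNTHETIC = "synthetic"  # no to all three: human is only the approver


@dataclass(frozen=True)
class AgencyTestAnswers:
    """Yes/no answers to the three questions, for one decision."""
    originated: bool    # Did a human originate this decision?
    understood: bool    # Did a human understand the reasoning?
    reproducible: bool  # Could a human reproduce it without the system?


def classify_agency(answers: AgencyTestAnswers) -> AgencyLevel:
    """Map three yes/no answers onto genuine, partial, or synthetic agency."""
    yes_count = sum(
        [answers.originated, answers.understood, answers.reproducible]
    )
    if yes_count == 3:
        return AgencyLevel.GENUINE
    if yes_count == 0:
        return AgencyLevel.SYNTHETIC
    return AgencyLevel.PARTIAL


if __name__ == "__main__":
    # Illustrative case: a reviewer who understands an AI-generated
    # recommendation but neither originated it nor could reproduce it.
    reviewer = AgencyTestAnswers(
        originated=False, understood=True, reproducible=False
    )
    print(classify_agency(reviewer).value)
```

The sketch treats the three questions as equally weighted, which the test as stated does not require. An institution applying it might reasonably decide that a no on origination matters more than a no on reproducibility.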

A civilization loses its agency the moment its decisions originate in systems its members cannot fully understand, judge, or verify.

The question for every institution is not whether this ever happens — it happens everywhere, inevitably, in any complex organization integrating AI. The question is whether it is measured, whether it is understood, whether the gap between genuine agency and synthetic agency is visible to the institution — or whether Agency Drift is occurring without observation, without accountability, without the possibility of correction.

What Disappears When Agency Ends

Agency is not just a philosophical property of human action. It is the mechanism through which the other properties that make civilization function — accountability, learning, correction, moral responsibility — are possible at all.

Accountability requires a subject. For accountability to be meaningful, there must be a genuine author behind the action — someone who conceived it, understood it, and could have chosen differently. When agency is synthetic — when the human approved a recommendation they did not originate and cannot fully explain — accountability becomes a legal fiction. The human bears the responsibility. The human did not, in the meaningful sense, make the decision.

This is not abstract. It is the pattern behind institutional failure in an AI-integrated environment: a financial collapse produced by AI trading systems that no human fully understood, a medical error generated by AI diagnostic support that no clinician fully evaluated, an infrastructure failure resulting from AI-assisted engineering decisions that no engineer could fully reproduce. In each case, humans are formally accountable. In each case, the agency required for accountability to be meaningful is synthetic.

Learning requires agency. The feedback loop that produces genuine learning — error, consequence, signal, correction — requires that the error be genuinely the actor’s, that the consequence be genuinely felt by the actor, that the signal reach the actor who can correct. When agency is synthetic, the error belongs to the system, the consequence belongs to the human, and the signal produces no learning in either — the system does not update from a single case, and the human does not understand the error well enough to correct it.

Automation does not remove agency. It relocates it.

When agency relocates into systems no one fully understands, the civilization becomes a passenger in its own machinery. Not because it has been conquered or coerced. Because it has optimized itself into a condition where the actions that matter most originate in systems that the people responsible for those actions did not author, cannot fully evaluate, and could not reproduce.

The machinery continues to function. The outputs continue to be produced. The decisions continue to be made. The civilization continues to act.

But the authorship is gone.

The Illusion That Persists

The most dangerous property of synthetic agency is the illusion it sustains. Not the illusion that things are going well — that can be dispelled by evidence. The illusion that someone is in charge.

Every action that emerges from AI-assisted decision processes has a human face. An executive who made a strategic call. A board that approved a direction. A government that enacted a policy. A professional who exercised judgment. The human face is not a deception: these humans were genuinely present, genuinely engaged, and genuinely convinced they were exercising agency.

What the human face obscures is the question of origin. The executive who made the call was choosing among options AI had structured. The board approving the direction was evaluating an AI-generated recommendation. The government enacting the policy was implementing an AI-assisted analysis. The professional exercising judgment was confirming an AI-produced conclusion.

The presence of the human face allows the civilization to believe that human authorship exists where synthetic authorization has replaced it. This belief is comfortable — it preserves the sense that civilization is self-directing, that its institutions are accountable, that its errors are correctable through the same mechanisms that have always corrected human error.

The comfort is dangerous. The mechanisms that correct human error require genuine human agency at the point of error — genuine authorship that can be identified, held accountable, and required to learn. When the agency is synthetic, the correction mechanisms find the face but not the origin. They hold the human accountable for the system’s decision. They require the human to learn from an error they did not make. They apply the correction where the authorization occurred rather than where the decision originated.

The error is not corrected. The system that originated it continues to originate similar decisions. The human who authorized it continues to authorize similar decisions, believing they are exercising agency, carrying responsibility for a process they did not author.

When a civilization loses agency, it does not stop acting. It simply stops being the author of what it does.

What Genuine Agency Requires

The preservation of genuine agency in an AI-integrated civilization is not a rejection of AI. It is the insistence that AI remain downstream of human intention rather than upstream of it — that the inversion be recognized, resisted, and structurally prevented in the domains where genuine human authorship is most consequential.

This requires what every other article in this series has required at its layer: the preservation of the capacity that AI is eroding. Not the refusal to use AI, but the insistence that using AI not substitute for the development and exercise of the genuine capability it is augmenting.

Genuine agency requires genuine capability — which means closing the Persistence Gap rather than accepting AI-dependent performance as equivalent to independent competence.

Genuine agency requires genuine understanding — which means developing the Layer 3 and Layer 4 comprehension that makes it possible to know when a recommendation is wrong, not just to evaluate whether it is coherent.

Genuine agency requires genuine judgment — which means exercising the moral horizon that can say no to an optimized recommendation on grounds that optimization cannot reach.

Genuine agency requires genuine verification — which means preserving the independent reference points that allow reality to answer outside the system generating the claims.

And genuine agency requires all of these simultaneously — because agency without capability is helpless, agency without understanding is blind, agency without judgment is directionless, and agency without verification is unmoored from the reality it claims to act in.

The civilization that preserves these capacities preserves the authorship of its own actions. The civilization that allows them to erode — at each layer, for each article’s reason, in each domain’s rational optimization — becomes the passenger.

The final stage of a civilization’s cognitive decline is not ignorance. It is action without authorship.

The actions continue. The outputs accumulate. The machinery runs. And somewhere in the gap between the recommendation approved and the intention that should have originated it, the civilization that built the most powerful tools in human history lost the one thing that made those tools extensions of human purpose rather than substitutes for it.

It lost the author.

Civilizations rarely fall because they lack power. They fall because they no longer recognize themselves as the ones who act.

All content published on VeritasVacua.org is released under Creative Commons Attribution-ShareAlike 4.0 International (CC BY-SA 4.0).

How to cite: VeritasVacua.org (2026). The End of Agency. Retrieved from https://veritasvacua.org/the-end-of-agency